Phase 5 gave the custom hosting control panel build the ability to provision the underlying account layer safely.

Linux users could be created, home directories could be built, and the application finally had a clean bridge into controlled system-level operations.

This phase builds directly on Phase 5, where the provisioning engine and system integration layer were introduced.

Phase 6 is where that groundwork stopped being theoretical and started becoming genuinely useful.

This phase pushed the panel into the live web stack itself.

Instead of stopping at account creation, the system now provisions the pieces required to actually host a website: Nginx vhosts, PHP-FPM pools, document root alignment, configuration validation, and safe service reloads.

In other words, this is the phase where a site stopped being just a database record and started becoming something the server could actually serve.

⚙️ Web Stack Provisioning in the Custom Hosting Control Panel Build

The goal of this phase was straightforward, even if the implementation was not.

When a site is created through the panel, the system now carries that process through the web stack layer as well.

That means generating the correct Nginx virtual host, preparing PHP-FPM to run under the correct account context, linking the domain to the right document root, validating configuration before anything gets reloaded, and rolling back cleanly if something goes wrong.

This moved the KR0311 Control Panel beyond internal provisioning and into real hosting orchestration.

- Nginx vhost configs are generated dynamically

- PHP-FPM pools are created for hosted accounts

- Document roots are aligned to the correct site path

- Config validation happens before reloads

- Rollback logic prevents bad configs from taking the stack down

So yes — the panel is now doing real hosting work.

Which is exactly when you find out whether your architecture was solid or just well-dressed optimism.

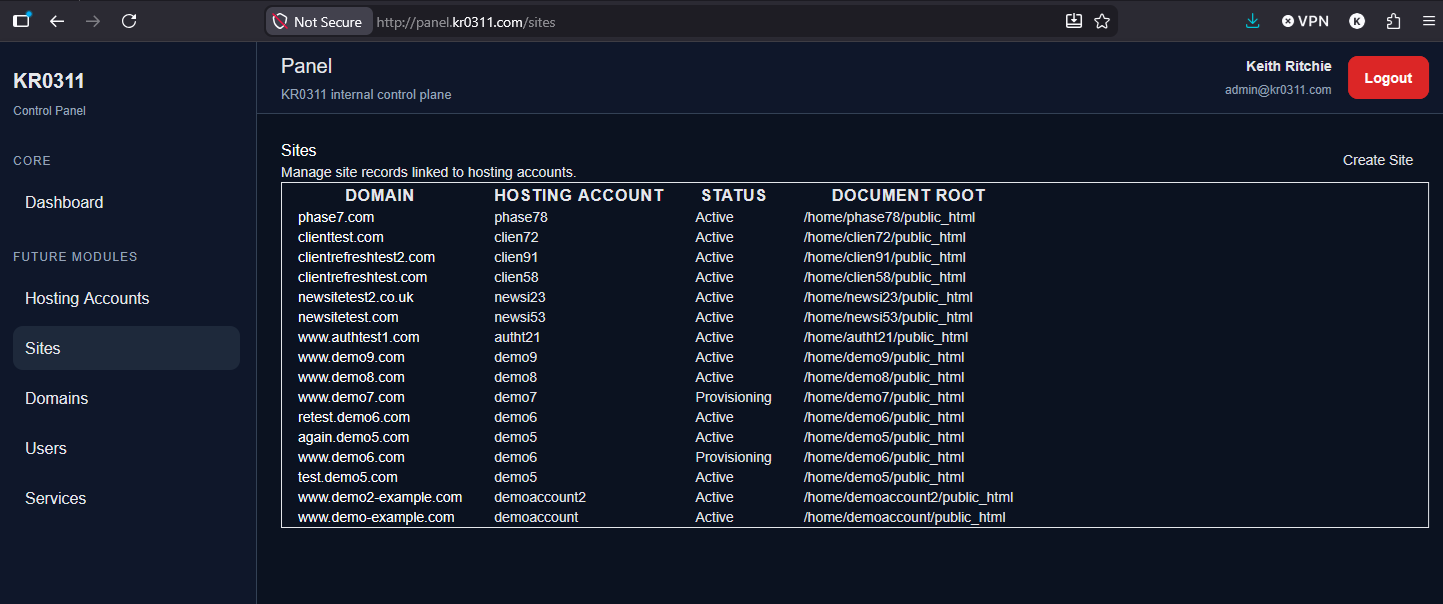

At this point, the panel is no longer just storing site records — it is actively managing live hosting configurations, as shown in the sites panel after provisioning.

🌐 Nginx Provisioning

🧩 Turning Site Records Into Real Vhosts

Phase 6 introduced dynamic Nginx vhost generation as part of site provisioning.

Instead of manually writing server blocks every time a new domain was added, the panel now creates them automatically from the application layer using the provisioning system introduced in Phase 5.

Generated configs are written into the expected Nginx structure:

- /etc/nginx/sites-available/

- /etc/nginx/sites-enabled/

That keeps the resulting layout familiar, maintainable, and consistent with standard Nginx hosting workflows.

The implementation also follows the same general principles described in the official Nginx documentation, which made it easier to keep the generated structure predictable rather than inventing something clever and regrettable.
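To make the idea of turning a site record into a server block a bit more concrete, here is a minimal sketch. It is written in Python purely for illustration (the panel itself is PHP-based), and the template layout, the `render_vhost` helper, the `snippets/fastcgi-php.conf` include, and the socket path are all assumptions rather than the project's actual code.

```python
import re
from string import Template

# Hypothetical template: the directives are standard Nginx, but the layout,
# the snippet include, and the socket path are assumptions for illustration.
# "$$" is how string.Template escapes a literal "$" (needed for Nginx variables).
VHOST_TEMPLATE = Template("""\
server {
    listen 80;
    server_name $domain;
    root $document_root;
    index index.php index.html;

    location / {
        try_files $$uri $$uri/ /index.php?$$query_string;
    }

    location ~ \\.php$$ {
        include snippets/fastcgi-php.conf;
        fastcgi_pass unix:$fpm_socket;
    }
}
""")

# Lowercase hostname labels separated by dots. Anything else is rejected so
# user input cannot smuggle extra directives into the generated config.
DOMAIN_PATTERN = re.compile(
    r"[a-z0-9]([a-z0-9-]{0,61}[a-z0-9])?(\.[a-z0-9]([a-z0-9-]{0,61}[a-z0-9])?)+"
)


def render_vhost(domain: str, document_root: str, fpm_socket: str) -> str:
    """Render a server block for one site record.

    The two paths are assumed to come from trusted panel code, not user input.
    """
    if not DOMAIN_PATTERN.fullmatch(domain):
        raise ValueError(f"refusing to render vhost for invalid domain: {domain!r}")
    return VHOST_TEMPLATE.substitute(
        domain=domain, document_root=document_root, fpm_socket=fpm_socket
    )
```

The design point worth copying is the validation step: the domain is the only piece of the template that originates from user input, so it is checked before it ever reaches a file on disk.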

✅ Validation Before Reload

This phase did not take the reckless route of writing config and hoping for the best.

Every generated Nginx configuration is validated before reload.

The provisioning flow writes the vhost file, creates the enablement symlink, runs nginx -t, and only proceeds with a reload if the configuration passes.

If validation fails, the panel rolls the change back instead of leaving the server in a broken state.

That sounds obvious on paper, but it is the difference between a provisioning system and a chaos generator.

↩️ Rollback on Failure

A failed config does not just stop the process. It triggers cleanup.

Bad vhost files and broken enablement links are removed, the failure is logged properly, and the related site status is marked accordingly.

That ensures the application state and the system state stay aligned, which becomes more important with every new moving part added to the panel.
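The write, enable, validate, reload, and roll back sequence described above can be sketched roughly as follows. This is an illustrative Python sketch, not the panel's PHP implementation: `provision_vhost`, `run_command`, and the `.conf` file naming are hypothetical, and the sketch assumes a brand-new site, so rollback simply deletes what was just created.

```python
import os
import subprocess
from pathlib import Path
from typing import Callable, Sequence

Runner = Callable[[Sequence[str]], int]


def run_command(argv: Sequence[str]) -> int:
    """Default runner: execute without a shell and return the exit code."""
    return subprocess.run(list(argv), capture_output=True).returncode


def provision_vhost(name: str, config_text: str, available_dir, enabled_dir,
                    run: Runner = run_command) -> bool:
    """Write and enable a vhost, reloading Nginx only if `nginx -t` passes."""
    available = Path(available_dir) / f"{name}.conf"  # file naming is an assumption
    enabled = Path(enabled_dir) / f"{name}.conf"
    try:
        available.write_text(config_text)
        os.symlink(available, enabled)
        if run(["nginx", "-t"]) != 0:
            raise RuntimeError("nginx -t rejected the configuration")
        if run(["systemctl", "reload", "nginx"]) != 0:
            raise RuntimeError("nginx reload failed")
        return True
    except (OSError, RuntimeError):
        # Rollback: remove the symlink first, then the config file, so the
        # next `nginx -t` run is not poisoned by leftovers from this attempt.
        for path in (enabled, available):
            if path.is_symlink() or path.exists():
                path.unlink()
        return False
```

Passing the runner in as a parameter means the flow can be exercised against temporary directories with a fake runner, without touching a real Nginx install.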

🐘 PHP-FPM Integration

👤 Dedicated Execution Context Per Hosting Account

Phase 6 also extended provisioning into PHP-FPM.

When a site is created, the system now provisions a PHP-FPM pool tied to the hosting account user.

Pool config files are generated into the standard PHP-FPM pool directory under /etc/php/8.3/fpm/pool.d/, and the pools run under the hosting account context rather than a generic shared execution model.

That approach keeps the design aligned with the rest of the project, where hosting accounts already map cleanly to their own Linux user and home structure.

It also lines up with the behaviour described in the PHP-FPM documentation, which helped keep the pool structure grounded in something sensible rather than home-grown chaos.
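For a sense of what a per-account pool definition might contain, here is a small illustrative sketch in Python. The directive names (`user`, `group`, `listen`, `pm` and friends) are standard PHP-FPM pool settings, but the specific values, the socket naming, the `www-data` socket owner, and the `render_fpm_pool` helper are assumptions, not the panel's real template.

```python
import re

POOL_DIR = "/etc/php/8.3/fpm/pool.d"

# Conservative Linux username check, so the name is safe to use in a path
# and in a config section header.
USERNAME_PATTERN = re.compile(r"[a-z_][a-z0-9_-]{0,31}")


def render_fpm_pool(account_user: str) -> tuple[str, str]:
    """Return (pool file path, pool config text) for one hosting account."""
    if not USERNAME_PATTERN.fullmatch(account_user):
        raise ValueError(f"invalid account user: {account_user!r}")
    config = (
        f"[{account_user}]\n"
        f"user = {account_user}\n"    # run PHP as the hosting account
        f"group = {account_user}\n"
        f"listen = /run/php/php8.3-fpm-{account_user}.sock\n"  # assumed socket naming
        "listen.owner = www-data\n"   # assumed: the user Nginx runs as
        "listen.group = www-data\n"
        "pm = ondemand\n"             # assumed process manager settings
        "pm.max_children = 5\n"
        "pm.process_idle_timeout = 10s\n"
    )
    return f"{POOL_DIR}/{account_user}.conf", config
```

The important part is the `user` and `group` lines: they are what ties PHP execution to the hosting account rather than to a shared web user.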

⚖️ Why Per-Account Pools Made Sense

One of the decisions in this phase was whether PHP-FPM should be split per site or per hosting account.

The chosen direction was the more practical one for this stage of the project: a dedicated pool per hosting account.

That gave the panel strong enough isolation for the current architecture without introducing unnecessary overhead or turning the server into a graveyard of hyper-fragmented pool definitions.

It matched the account-based hosting model already in place, kept provisioning cleaner, and made the execution structure easier to reason about.

For this version of the panel, that was the right balance between isolation, maintainability, and scalability.

✅ Validation Before Reload

Just like Nginx, PHP-FPM provisioning follows a validation-first pattern.

The pool file is rendered and written, the PHP-FPM configuration is tested (the php-fpm binary has a -t flag for exactly this), and only then is the service reloaded.

If the validation fails, the panel removes the bad pool config and marks the provisioning attempt as failed.

No blind reloads. No wishful thinking. No “it’ll probably be fine.”
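A rough Python sketch of that PHP-FPM flow is below. The binary name `php-fpm8.3` and the service name `php8.3-fpm` are assumptions based on the Debian-style path used in this phase, and `provision_pool` is a hypothetical helper. Removing the pool file when the reload itself fails is also a choice made for this sketch, not something the panel is confirmed to do.

```python
import subprocess
from pathlib import Path
from typing import Callable, Sequence


def run_command(argv: Sequence[str]) -> int:
    """Default runner: execute without a shell and return the exit code."""
    return subprocess.run(list(argv), capture_output=True).returncode


def provision_pool(pool_file, pool_text: str,
                   run: Callable[[Sequence[str]], int] = run_command) -> bool:
    """Write a pool file, test the FPM config, then reload. True on success."""
    pool_file = Path(pool_file)
    pool_file.write_text(pool_text)
    steps = (
        ["php-fpm8.3", "-t"],                   # config test (assumed binary name)
        ["systemctl", "reload", "php8.3-fpm"],  # reload (assumed service name)
    )
    for argv in steps:
        if run(argv) != 0:
            # Remove the bad pool so later provisioning runs start clean.
            pool_file.unlink(missing_ok=True)
            return False
    return True
```

The caller would then mark the provisioning attempt as failed whenever this returns `False`.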

🧠 Service Architecture

🔧 Extending the Phase 5 Pattern

Phase 6 did not throw away the architecture established in Phase 5.

It extended it.

The provisioning model remained service-led, with the application layer orchestrating the workflow and the system layer performing tightly controlled actions through safe command execution.

New services introduced in this phase included:

- SiteProvisioningService

- NginxProvisioningService

- a PHP-FPM provisioning service (or equivalent)

These services sit on top of the existing SystemCommandService, which remains responsible for command execution via Symfony Process.

The controller layer still does not directly run system commands.

That separation remains one of the most important architectural rules in the entire project.

🧱 Keeping the Boundaries Clean

The panel continues to follow a clear split between responsibilities.

- Controllers handle request flow and persistence entry points

- Jobs handle asynchronous provisioning execution

- Services orchestrate system work

- SystemCommandService executes privileged commands safely

That structure keeps the codebase easier to reason about and makes the provisioning workflow far safer than stuffing server operations directly into request handlers and hoping the app survives its own enthusiasm.
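As an illustration of what "executes privileged commands safely" can mean in practice, here is a minimal Python sketch of a command gateway. The real SystemCommandService is built on Symfony Process, so everything below (the allowlist, `run_system_command`, `CommandResult`) is a hypothetical analogue rather than the project's code.

```python
import subprocess
from dataclasses import dataclass
from typing import Sequence

# Hypothetical allowlist: only exact, known argument vectors may run.
ALLOWED_COMMANDS = {
    ("nginx", "-t"),
    ("systemctl", "reload", "nginx"),
}


class CommandNotAllowed(Exception):
    """Raised when a caller asks for a command outside the allowlist."""


@dataclass
class CommandResult:
    exit_code: int
    stdout: str
    stderr: str


def run_system_command(argv: Sequence[str], timeout: int = 30,
                       executor=subprocess.run) -> CommandResult:
    """Run an allowlisted command as an argument list, never via a shell."""
    if tuple(argv) not in ALLOWED_COMMANDS:
        raise CommandNotAllowed(f"command not allowed: {list(argv)!r}")
    completed = executor(
        list(argv), capture_output=True, text=True, timeout=timeout, check=False
    )
    return CommandResult(completed.returncode, completed.stdout, completed.stderr)
```

Two choices do most of the work here: commands are passed as argument lists so nothing is interpreted by a shell, and every caller goes through one function, which gives a single place to log, restrict, and time out system calls.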

🚨 Async Provisioning: The Critical Fix

💥 Why the Original Approach Failed

This was the most important correction in Phase 6.

Originally, site provisioning was triggered synchronously inside the controller request lifecycle.

On the surface, that seemed convenient. The request came in, the site was created, the system changes ran, and everything happened in one go.

In practice, it was a terrible idea.

Phase 6 provisioning performs live changes against the same web stack that serves the panel itself.

That includes:

- PHP-FPM pool creation

- PHP-FPM reloads

- Nginx vhost creation

- Nginx validation

- Nginx reloads

Trying to do all of that synchronously during the request led to intermittent 502 Bad Gateway errors and general panel instability.

Which, to be fair, is a pretty solid sign that the application does not enjoy hot-swapping the floor beneath its own feet.

✅ The Correct Architecture

The fix was to move provisioning out of the request cycle entirely.

That decision fundamentally changed the Phase 6 architecture for the better.

The final request flow now works like this:

- Controller creates the site record

- Controller dispatches a ProvisionSite job

- The UI returns immediately

- The queue worker processes provisioning asynchronously

- Provisioning services perform the live system work

This is not just a bug fix.

It is a production-grade architectural decision.

Anything that modifies live services now happens outside the request cycle, which protects the application from self-inflicted downtime and gives the provisioning layer a much safer execution model.
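The shape of that flow can be sketched in a few lines of Python. This is only a stand-in: an in-process `queue.Queue` plays the role of the Redis-backed queue, and `create_site`, `run_worker`, and the `sites` dictionary are hypothetical names, not the panel's controller or ProvisionSite job.

```python
import queue
from typing import Callable

sites: dict = {}       # stand-in for the sites table: domain -> status
jobs = queue.Queue()   # stand-in for the real queue backend


def create_site(domain: str) -> dict:
    """'Controller': record the site, enqueue the work, return immediately."""
    sites[domain] = "provisioning"
    jobs.put(domain)
    return {"domain": domain, "status": "provisioning"}


def run_worker(provision: Callable[[str], None]) -> None:
    """'Queue worker': process jobs until a None sentinel arrives."""
    while True:
        domain = jobs.get()
        try:
            if domain is None:
                return
            provision(domain)          # the live system work happens here
            sites[domain] = "active"
        except Exception:
            sites[domain] = "failed"   # a failure never reaches the request
        finally:
            jobs.task_done()
```

Note that `create_site` never calls `provision` itself. The response is built before any service is touched, which is the whole point of the change.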

🔄 Queue Worker and Operational Stability

Once provisioning moved into jobs, queue processing became part of the actual control plane architecture rather than a nice extra.

The project now uses a Redis-backed queue, with a dedicated systemd-managed worker service to ensure provisioning continues across reboots and does not depend on someone leaving a terminal session open all day.

That was an important operational maturity step.

A queue-driven provisioning system without a persistent worker is just a fancy way of creating stuck records.

🛡️ Safe Reload Strategy

🌐 Nginx

The Nginx flow is intentionally strict:

- Write config

- Enable site

- Run nginx -t

- If valid, reload

- If invalid, roll back

This ensures a broken vhost never gets promoted into the live stack unchecked.

🐘 PHP-FPM

The PHP-FPM flow follows the same philosophy:

- Write pool config

- Validate PHP-FPM configuration

- If valid, reload

- If invalid, remove the bad config and fail cleanly

The key theme of Phase 6 was that service reloads are now treated as controlled operations, not blind side effects.

📊 Status System for Sites

Sites now have a proper provisioning lifecycle tied to real system outcomes.

The status values used in Phase 6 are:

- provisioning

- active

- failed

A newly created site enters the provisioning state first.

If provisioning completes successfully, it becomes active. If the system work fails, it becomes failed.

This matters because the panel is no longer dealing with hypothetical site entries.

It is now managing live infrastructure state, and the application needs to reflect that honestly.
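One way to keep that lifecycle honest is to make the allowed transitions explicit. The Python sketch below is illustrative only: the three status values come from this phase, while `SiteStatus`, `ALLOWED_TRANSITIONS`, and `transition` are hypothetical names. It deliberately models only the transitions described here, so retrying a failed site is left out.

```python
from enum import Enum


class SiteStatus(str, Enum):
    PROVISIONING = "provisioning"
    ACTIVE = "active"
    FAILED = "failed"


# Only the transitions described in this phase are modelled.
ALLOWED_TRANSITIONS = {
    SiteStatus.PROVISIONING: {SiteStatus.ACTIVE, SiteStatus.FAILED},
    SiteStatus.ACTIVE: set(),
    SiteStatus.FAILED: set(),
}


def transition(current: SiteStatus, target: SiteStatus) -> SiteStatus:
    """Return the new status, or raise if the change is not allowed."""
    if target not in ALLOWED_TRANSITIONS[current]:
        raise ValueError(
            f"illegal status change: {current.value} -> {target.value}"
        )
    return target
```

With a guard like this, a site cannot quietly jump from failed to active without the provisioning work actually having run.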

🧾 Logging and Visibility

The dedicated provisioning log introduced in Phase 5 was expanded in this phase to provide much better visibility into what the system is doing.

storage/logs/provisioning.log now captures far more than just account-level actions.

Phase 6 logging includes:

- Nginx config generation

- PHP-FPM pool creation

- validation results

- reload attempts

- failures and rollback actions

That visibility became especially important once provisioning moved into asynchronous jobs.

When work is happening outside the request lifecycle, good logging stops being helpful and starts being essential.
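A dedicated log channel with consistent, searchable fields is simple to sketch. The Python below is an illustration using the standard `logging` module; the panel writes to storage/logs/provisioning.log through its own stack, and the `site=... step=... outcome=...` line format and both helper names are assumptions.

```python
import logging


def build_provisioning_logger(log_path: str) -> logging.Logger:
    """Create a logger that writes only to the dedicated provisioning log."""
    logger = logging.getLogger("provisioning")
    logger.setLevel(logging.INFO)
    logger.propagate = False   # keep provisioning noise out of the main app log
    logger.handlers.clear()    # avoid duplicate lines if this is called twice
    handler = logging.FileHandler(log_path, encoding="utf-8")
    handler.setFormatter(
        logging.Formatter("%(asctime)s %(levelname)s %(message)s")
    )
    logger.addHandler(handler)
    return logger


def log_step(logger: logging.Logger, site: str, step: str, outcome: str,
             level: int = logging.INFO) -> None:
    """Record one provisioning step in a consistent key=value format."""
    logger.log(level, "site=%s step=%s outcome=%s", site, step, outcome)
```

Because every line carries the site and the step, a failed job can be traced with a single search on the domain instead of by guessing which worker run it belonged to.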

⚠️ Error Handling

Phase 6 kept the same design principle established earlier in the build: system failures must not turn into ugly application failures.

If provisioning hits a problem, the panel does not dump raw system output into the UI and it does not implode because a config test failed somewhere under the hood.

Instead, the failure is logged properly, rollback happens where required, the site status is updated, and the user-facing experience stays controlled and readable.

That kind of behaviour tends to feel boring when it works, which is exactly the point.
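The split between what gets logged and what the user sees can be shown with a tiny Python sketch. All names here (`ProvisioningError`, `handle_failure`, the message text) are hypothetical, and a plain list stands in for the provisioning log.

```python
class ProvisioningError(Exception):
    """Carries the failing step plus the raw system output for the log."""

    def __init__(self, step: str, raw_output: str):
        super().__init__(f"{step} failed")
        self.step = step
        self.raw_output = raw_output


def handle_failure(site: dict, error: ProvisioningError, log_lines: list) -> str:
    """Log the full detail, mark the site failed, return a safe UI message."""
    log_lines.append(
        f"site={site['domain']} step={error.step} output={error.raw_output}"
    )
    site["status"] = "failed"
    # The raw output stays in the log; the UI only gets a readable summary.
    return "Provisioning failed. The details have been recorded in the provisioning log."
```

The raw command output is useful to whoever reads the log, but it has no business in a browser, so only the summary string crosses that boundary.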

🧠 Challenges and Decisions

🔄 Sync vs Async

The biggest challenge in this phase was not generating config files.

It was respecting the reality of what happens when an application starts reloading the same services that keep it alive.

The move from synchronous provisioning to queued jobs was the defining technical decision of the phase.

It solved the immediate instability problem, but more importantly, it established the correct long-term pattern for any future feature that touches live services.

🚫 Preventing Downtime

Another major focus was preventing bad configuration from affecting the active stack.

That meant validating before reload, rolling back on failure, and treating config generation as something that could go wrong in very real ways rather than something to assume will always behave nicely.

🏗️ Architecture Evolution

Phase 6 also sharpened the architecture itself.

What started in earlier phases as sensible separation between controllers and services has now become a much more serious application design rule.

The panel is no longer just storing records and rendering pages. It is controlling server behaviour.

That raises the bar for how disciplined the code needs to be.

🎨 UI Refinement

Although Phase 6 was primarily a backend and infrastructure phase, the panel continued evolving on the usability side as well.

The interface remained consistent with the existing KR0311 panel structure:

- @extends('layouts.panel')

- view('dashboard.index')

- .nav-link sidebar system

- public/css/panel.css

- no Tailwind

- no inline styles

This phase was not about redesigning the panel.

It was about refining the flow around accounts, sites, and provisioning so the experience becomes more intuitive as the system grows.

The structure continues moving in a more cPanel-style direction, with clearer separation between account-level ownership and site-level actions, cleaner navigation paths, and more obvious progression from data entry to real provisioning outcomes.

Not flashy. Just increasingly usable, which is usually the better trade.

🔥 Why This Phase Matters

Phase 6 is a major milestone because it is the point where the control panel stopped being a structured admin app and started acting like an actual hosting platform.

The system now controls the web stack directly.

Sites are no longer just stored in the database.

They are now capable of becoming live, server-backed web properties with Nginx and PHP-FPM provisioned through the panel itself.

That is a substantial leap in capability.

More importantly, it was achieved without throwing away the safety rules established in earlier phases.

The result is not just more power, but more power handled properly.

🔜 What’s Next in Phase 7

With web stack provisioning in place, the next logical step is to move into the domain readiness and certificate layer.

Phase 7 will build on this foundation with work around:

- SSL provisioning

- DNS-aware validation

- domain readiness checks

That will bring the panel closer to handling the full site activation lifecycle rather than just the local server-side portion of it.

🏁 Final Thoughts

Phase 6 is one of those phases that looks tidy in a summary and felt considerably less tidy while building it.

Under the surface, this was a meaningful shift in how the KR0311 Control Panel behaves.

It now provisions the live web stack, handles validation and rollback properly, uses asynchronous jobs to avoid destabilising itself, and keeps the application layer clean while doing it.

That is a big step forward.

It also shows why the earlier phases mattered.

The service boundaries, status model, logging strategy, and clean panel structure were not busywork.

They were groundwork.

Phase 6 is where that groundwork started paying rent.

The panel is still early in the journey, but at this point it is no longer pretending to be a hosting control panel.

It is becoming one.